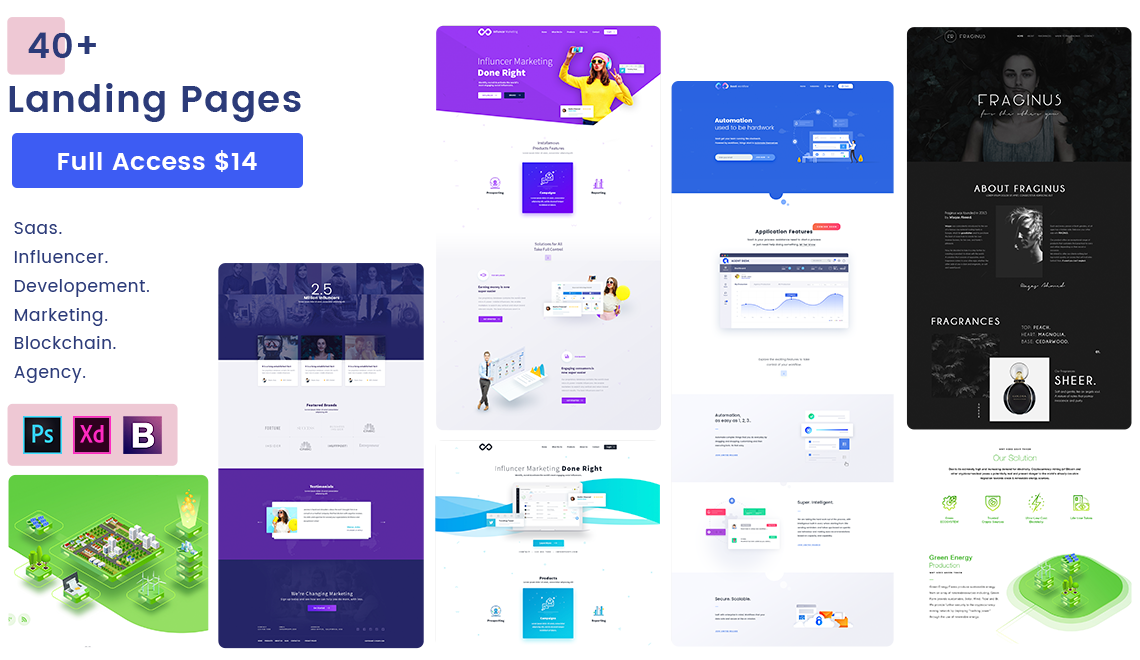

Explore top handcrafted website templates, built for creative workflows, to use in your next project.

Unlimited Access to All Website Templates

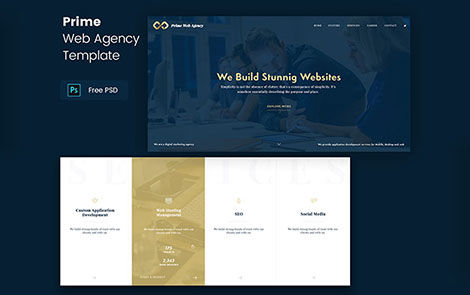

Custom website templates by top designers for SaaS, Marketing, eCommerce, Blockchain, Agency, Restaurant, Food, and Photography.

for just $14